Dell Networking Summit 2012 & Dell Fabric Manager

Last week Dell brought more than 500 Dell Networking sales reps and engineers from around the world together in Austin for an intense 3-day training summit – named “Dell Networking Summit 2012”. By any measure this was a courageous investment for the company to make, given the challenging economic times and the transition Dell itself is facing today with PC sales (affecting the stock price).

For those organizing the event and delivering content (me), the stakes couldn’t have been higher to deliver a successful event back to Dell. Just the soft costs of removing over 500 sales people from the field for a week must be in the millions – in addition to the obvious hard costs of flights and hotels. The fact that Dell made this investment, at this time, I think speaks volumes to how serious this company really is about Networking, and the role it intends to play as an end-to-end IT solutions provider. And best of all, the event concluded as a smashing success!

I was tasked with creating all of the content for and delivering one of the mandatory technical sessions: Distributed Core Fabric & Dell Fabric Manager. My partner in this session was Mahesh Natarajan (pictured left) who is the product manager for Dell Fabric Manager. Mahesh was a tremendous help in handling Q&A while I drove the demo. I also delivered one additional optional session: Dell solutions for OpenStack & Hadoop. Over 250 network sales engineers rotated through these classes over three days and nine sessions. The sessions were all absolutely fantastic. It couldn’t have gone any better.

First we covered the fundamental concepts of the Leaf/Spine fabric – the underpinnings of what Dell refers to as its “Distributed Core” architecture. We talked about terminology and switch roles. What is a Leaf? What is a Spine? How does a Leaf switch connect to the Spines? How does this determine the fabric scalability? How large a fabric can we build for our customers using switches on the truck today, Z9000 and S4810? (Answer: pretty large.) Note: Be sure to check out the Clos Fabrics Explained webinar I did with Ivan Pepelnjak the week before.
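To make the scalability question concrete, here is a rough sizing sketch for a two-tier Leaf/Spine fabric in which every Leaf has exactly one uplink to every Spine. The function name and parameters are hypothetical, and the port counts in the example (a Z9000 Spine with 32 x 40GbE ports, an S4810 Leaf with 48 x 10GbE access ports and 4 x 40GbE uplinks) are assumptions rather than figures from the session. Real designs may break 40GbE ports out into 10GbE links or reserve ports, which changes the numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FabricSize:
    spines: int
    leaves: int
    access_ports: int
    oversubscription: float


def size_two_tier_fabric(spine_ports, leaf_uplinks, leaf_access_ports,
                         uplink_gbps, access_gbps):
    """Size a two-tier Leaf/Spine fabric (one uplink from each Leaf to each Spine)."""
    # Each Leaf spreads its uplinks across the Spines, one per Spine.
    spines = leaf_uplinks
    # Each Spine port terminates exactly one Leaf.
    leaves = spine_ports
    access_ports = leaves * leaf_access_ports
    # Ratio of server-facing bandwidth to fabric-facing bandwidth on one Leaf.
    oversubscription = (leaf_access_ports * access_gbps) / (leaf_uplinks * uplink_gbps)
    return FabricSize(spines, leaves, access_ports, oversubscription)


# Assumed port counts: 32 x 40GbE Spine, Leaf with 48 x 10GbE + 4 x 40GbE.
example = size_two_tier_fabric(
    spine_ports=32, leaf_uplinks=4, leaf_access_ports=48,
    uplink_gbps=40, access_gbps=10,
)
print(example)
```

Under those assumptions the result is 4 Spines, 32 Leaves, 1,536 access ports, and 3:1 oversubscription at each Leaf. Trading access ports for more uplinks lowers the oversubscription ratio at the cost of fewer server-facing ports per Leaf.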

Second, we discussed the common challenges customers are facing when designing and deploying data center fabrics. It doesn’t matter who you buy the fabric from. Be it Dell, Cisco, Juniper, Arista, Brocade, you name it, designing and deploying a data center fabric is not easy. How do you design the fabric you need? Who is the lucky soul that spends weeks in front of Visio and Excel documenting the fabric? Better hope they didn’t make any mistakes. Who is going to roll up their sleeves and pound away at the keyboard configuring a mundane Layer 3 configuration on each and every switch? Did they make any mistakes? I hope not. How long will it take to eventually find those mistakes? Who is going to make sure each switch is loaded with the proper software? Who is going to validate that the entire fabric was constructed correctly: cabling, configurations, documentation, etc.? Who is going to re-validate the entire fabric, and re-document it, every time the fabric grows and new switches are added?

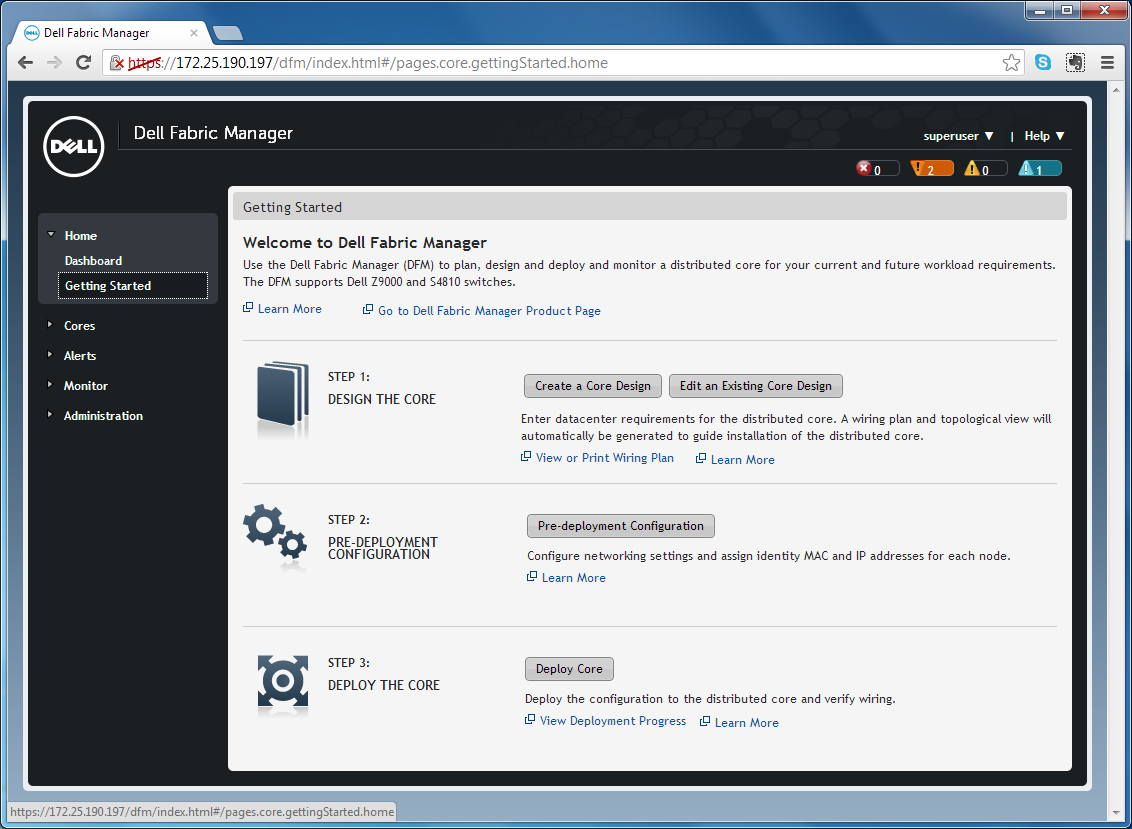

Third, we demonstrated Dell Fabric Manager (DFM). This is a software tool written by Dell (from scratch) that automates the deployment of the Leaf/Spine “Distributed Core” architecture. In the class, I deployed (from scratch) a Leaf/Spine fabric on live gear using DFM. I picked a fabric design from a template. I never once touched a switch CLI – I didn’t type a single OSPF command. DFM auto-configured the fabric for me. I didn’t need to fire up Visio or Excel – the fabric wiring plan was auto-documented for me within seconds (as a PDF file). I can take that PDF wiring plan and email it off to the facilities team right then and there. And best of all, the fabric deployment was auto-validated afterwards within seconds – both the configuration and wiring of each switch. I also demonstrated that changing a switch configuration behind DFM’s back will be caught, with the exact changes identified, because fabric validation is a continually recurring process, not just a one-time event.
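The general idea of generating a wiring plan and then validating the cabling against it can be sketched in a few lines. This is only an illustration of the concept, not how DFM is implemented. The function names and the port naming scheme are hypothetical, and the `observed` set is assumed to come from some neighbor-discovery source (LLDP data, for example) that is not shown here.

```python
def build_wiring_plan(spines, leaves):
    """Return the intended links: every Leaf connects once to every Spine."""
    plan = set()
    for leaf_idx, leaf in enumerate(leaves, start=1):
        for spine_idx, spine in enumerate(spines, start=1):
            # Hypothetical naming: Leaf uplink N goes to Spine N,
            # and Spine port M goes to Leaf M.
            plan.add(((leaf, f"uplink{spine_idx}"), (spine, f"port{leaf_idx}")))
    return plan


def validate_wiring(plan, observed):
    """Diff the intended links against the links actually discovered."""
    return {
        "missing": sorted(plan - observed),      # planned but not found
        "unexpected": sorted(observed - plan),   # found but not planned
    }


spines = ["spine1", "spine2"]
leaves = ["leaf1", "leaf2"]
plan = build_wiring_plan(spines, leaves)

# Simulate one miscabled link: leaf2's second uplink lands on the wrong Spine port.
good_link = (("leaf2", "uplink2"), ("spine2", "port2"))
bad_link = (("leaf2", "uplink2"), ("spine2", "port3"))
observed = (plan - {good_link}) | {bad_link}

report = validate_wiring(plan, observed)
print(report)
```

Because the check is a plain set difference, it can be re-run on a schedule, which matches the notion of validation as a recurring process rather than a one-off step. The same pattern would apply to comparing intended and running switch configurations.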

In a nutshell Dell Fabric Manager is an automation engine for data center fabrics built with standard proven Layer 3 protocols – no SDN fairies or OpenFlow pixie dust here (yet). This can be something that an admin interfaces with via a web browser, as I demonstrated in the class. But even more interesting, there’s nothing preventing this automation engine from being something that runs behind the scenes – in the background – under the hood – as a cog in a “machine” running a cloud solution.

More to come on DFM later …

Above is a pic of the real Dell Networking gear used in the class — located remotely in “Dell Solution Centers” (DSC) in Austin (and all over the world). The two Z9000 and six S4810 switches in the top half were used by my colleague John Beck to demonstrate the coming VLT (LAG) capabilities on the Z9000. The two Z9000 and four S4810 switches on the bottom half were wired in a Leaf/Spine topology for my class.

All in all, the sessions were great – but best of all – the event highlight for me was the level of engagement and enthusiasm shown by all of the attendees. It really showed that not only is Dell the company serious about Networking, but the networking folks themselves are really serious about Networking. That (for me) was a very encouraging sight to see. Despite the challenges facing Dell in other areas of the business, the Enterprise business, which includes Networking (and Servers and Storage), has the passion and products to make a real difference.

Can Dell move the needle in data center networking? I guess only time will tell for sure. But I’m optimistic and having fun because the key ingredients are there – Dell has good networking products, good networking software, an energized team, and the company is making the necessary investments. It’s not all perfect, but what ever is? The fun part is challenging the status quo with a bottom-up view of data center networking – looking at clusters of servers & storage and the networking that best glues that solution together.

Cheers, Brad