Cisco Nexus 5000 Announced Today

What is the new Cisco Nexus 5000? It is the industry's first switch to deliver unified server I/O, carrying Fibre Channel and IP traffic over a single 10G Ethernet port to the server. The Nexus 5000 delivers very low-latency, wire-speed, lossless Ethernet service to the server.

As you can see from the photo, the Nexus 5000 does not have RJ45 ports. Instead it uses SFP+ ports, which can be populated with an SFP+ twinax copper cable to deliver 10GE over copper down to the server. Why not RJ45 10GBASE-T? Two major reasons: power and latency.

- Power consumed (each end) by 10GBASE-T = ~8 W

- Power consumed (each end) by SFP+ twinax = ~0.1 W

- Latency of 10GBASE-T = ~2.5 µs

- Latency of SFP+ twinax = ~0.25 µs
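To get a feel for what these per-port numbers mean at rack scale, here is a rough back-of-the-envelope sketch. The power and latency figures are the approximate values listed above; the 40 server links per rack is a purely hypothetical number chosen for illustration, not something from the announcement.

```python
# Approximate per-end figures quoted in the list above.
POWER_W = {"10GBASE-T": 8.0, "SFP+ copper": 0.1}
LATENCY_US = {"10GBASE-T": 2.5, "SFP+ copper": 0.25}


def link_power_watts(media: str, links: int) -> float:
    """Total PHY power for `links` links, counting both ends of each link."""
    return POWER_W[media] * 2 * links


def latency_ratio() -> float:
    """How many times higher the 10GBASE-T latency is than SFP+ copper."""
    return LATENCY_US["10GBASE-T"] / LATENCY_US["SFP+ copper"]


SERVER_LINKS = 40  # hypothetical rack size, an assumption for illustration

if __name__ == "__main__":
    for media in POWER_W:
        watts = link_power_watts(media, SERVER_LINKS)
        print(f"{media}: {watts:.0f} W for {SERVER_LINKS} links")
    print(f"latency ratio: {latency_ratio():.0f}x")
```

Under that assumed rack size, the interface power works out to roughly 640 W for 10GBASE-T versus about 8 W for SFP+ copper, with about a tenfold latency difference.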

You could also use SFP+ fiber to the server, but at a higher cost. The SFP+ copper cable, on the other hand, is expected to cost $100 or less.

This is the cable you will buy for downlink connectivity to the server. As you can see, the SFP+ connector is soldered to the twinax copper cable at the factory:

What is the downside of this SFP+ twinax copper cable? Its maximum distance is 10 m.

This means your SFP+ twinax copper cabling will stay within the rack, since it does not have the reach to run across the data center.

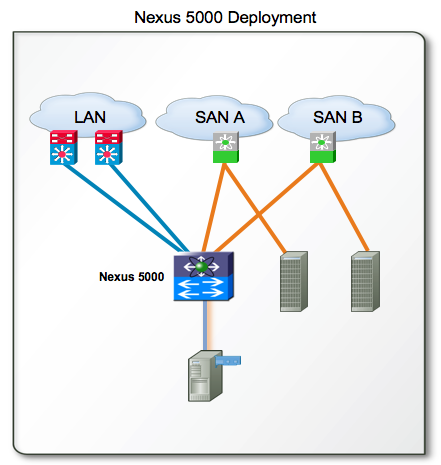

The Nexus 5000 is therefore a Top of Rack switch. You will run fiber from your Nexus 5000 (at the top of the rack) to the 10GE aggregation point (Nexus 7000) for traditional IP connectivity. You will also run fiber from your Nexus 5000 to your SAN fabric (MDS 9500).
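The cabling rule this implies can be written down as a tiny helper: twinax copper for any run that fits within the 10 m limit, fiber for everything longer. This is only a sketch; the function name and the example run lengths are made up for illustration, and it ignores slack, routing and other practical cabling concerns.

```python
TWINAX_MAX_M = 10.0  # maximum SFP+ twinax copper reach quoted in this post


def pick_media(run_length_m: float) -> str:
    """Return a cabling choice for a run of the given length in meters."""
    if run_length_m <= 0:
        raise ValueError("run length must be positive")
    if run_length_m <= TWINAX_MAX_M:
        return "SFP+ twinax copper"
    return "SFP+ fiber"


if __name__ == "__main__":
    # Hypothetical run lengths: a server in the same rack, and an uplink
    # to an aggregation switch elsewhere in the data center.
    for name, length in [("server downlink", 3.0), ("uplink", 30.0)]:
        print(f"{name} ({length} m): {pick_media(length)}")
```

With these example lengths, the in-rack server downlink lands on twinax and the uplink to the aggregation or SAN layer lands on fiber, which is the Top of Rack layout described above.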

What is the impact of this? Is Top of Rack clearly the way to go now? Traditionally, Cisco has never picked sides in the Top of Rack vs. End/Middle of Row debate in data center infrastructure cabling; we accommodate both implementations very nicely. However, given that 10GE server connectivity appears to be going in the SFP+ direction, does that mean we will start to encourage customers to give Top of Rack more consideration?

Or will the large existing installed base of End/Middle-of-Row Cat6 push Cisco to deliver a Nexus 5000/7000 with 10GBASE-T, notwithstanding the power and latency issues that come with it? Only time will tell.